For a long time, I’ve had it in the back of my mind to write about why it’s often a bad idea to start with microservices for a brand-new project.

The time has come: that’s exactly what I’ll talk about in this article.

Microservices have become the default, and it almost feels like we’ve always been living in a world of microservices. Lately, when I talked to other developers and asked how they would start a greenfield project, the answer was almost always: well, one microservice for this, another one for that, another one for user management, one more for authentication, another for authorization, one more for session management, and the list could go on.

I’m gonna try to shed some light on what nobody is talking about when it comes to microservices. And yeah, it’s gonna be first-hand experience from past projects I worked on.

The lie

I collected some of the advantages of microservices that other articles mention:

- Fault isolation

- Eliminating the technology lock

- Easier understanding

- Faster deployment

- Scalability

And yeah, these are not false promises, but I have to be honest with you: they don’t come easily just because you’re using microservices.

Let me break down each advantage from the list.

Fault isolation. Since the application consists of multiple services, if one of them goes down, only that part of the system is affected. Think about Netflix: when you’re watching a show, you don’t care about the recommendations. Say they have one service that handles current watchers and provides them with the video stream, and another service that handles personal user recommendations. If the recommendation service goes down, the most important functionality in their system, i.e. watching shows, is not affected. The fault is isolated.

Eliminating the technology lock. Think about monoliths. Picture a huge application with hundreds or thousands of APIs and hundreds of database tables. The app was written in Java and the team has spent the last 5 years developing it. A fancy new language comes out which on paper brings better performance, better security, whatever. This could be Go or Rust, and the team wants to experiment with the language and its tech stack. How can they do that in a monolith environment? They can’t, because it’s a single deployment package. You can’t just (at least not easily) switch parts of the app over to a different language.

With microservices, on the other hand, you can use different technology stacks for different services. Service A could be written in Java, service B could be written in Go, service C could be written in Whitespace, if you’re brave enough. 🙂

Easier understanding. When you have multiple services, each responsible for a small fraction of the overall functionality, each service is inherently smaller and hence easier to understand.

Faster deployment. In a regular monolith system, you either deploy completely or you don’t deploy at all. You have one package to deploy and it’s an all-or-nothing scenario. With microservices you have the chance to deploy independently, meaning that if you need to deploy an upgrade to the recommendation service (going back to the Netflix example), you can deploy just that single service and save serious time.

Scalability. My all-time favorite. You can scale up your services by starting multiple instances to increase the capacity for a particular functionality. Sticking with the previous example, if people are looking at a lot of recommendations on Netflix, they can easily start multiple instances of the recommendation service to cope with the load. In a monolith environment, you either scale up every single part of your app or nothing.

Microservices in real life

I’m gonna hit you with a hard truth, my friend. I’m not saying those advantages cannot be achieved, but you, your project and your organization have to work really hard to make them possible.

Infrastructure requirements

Let me start with one of my biggest difficulties with microservices: the infrastructure footprint.

Have you ever deployed a monolith? Of course we can complicate it, but in regular cases this is what you’ll need for a monolith if you deploy it to the cloud. Let’s take a simple online store app as an example.

- A load balancer for the app

- A compute instance for running the app

- A (relational) database for the app

- Jenkins for CI(CD)

- An ELK stack (Elasticsearch, Logstash, Kibana) for log aggregation

If you’re going with microservices:

- A Kubernetes cluster

- A load balancer

- Multiple compute instances for running the app and hosting the K8S cluster

- One or more (relational) databases, depending on whether you’re gonna go with single database per service or not

- A messaging system for service-service communication, e.g. Kafka

- Jenkins for CI(CD)

- An ELK stack (Elasticsearch, Logstash, Kibana) for log aggregation

- Prometheus for monitoring

- Jaeger/Zipkin for distributed tracing

And this is just a high-level overview, but the difference should be fairly clear. Microservices can really bring value to the table, but the question is: at what cost?

Even though the promises sound really good, you have more moving pieces within your architecture, which naturally leads to more failures. What if your messaging system breaks? What if there’s an issue with your K8S cluster? What if Jaeger is down and you can’t trace errors? What if metrics are not coming into Prometheus?
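To put a rough number on "more moving pieces", here is a back-of-the-envelope sketch. It assumes every component is up 99.9% of the time and that failures are independent; both are made-up simplifications, and the component counts (5 and 9) are simply the lengths of the two lists above. Not every one of those components sits on the request path, so treat this as an illustration of the trend, not a measurement.

```python
from math import prod


def combined_availability(availabilities):
    """Probability that all components are up at the same time.

    Assumes independent failures, which real systems rarely have.
    """
    return prod(availabilities)


# Hypothetical figure: each component is available 99.9% of the time.
PER_COMPONENT = 0.999

monolith = combined_availability([PER_COMPONENT] * 5)       # 5 items in the monolith list
microservices = combined_availability([PER_COMPONENT] * 9)  # 9 items in the microservices list

print(f"monolith setup:      {monolith:.4%}")
print(f"microservices setup: {microservices:.4%}")
```

Under these assumptions, every extra component you depend on multiplies in another chance of something being down.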

Initially you’re going to spend more time (and money for that matter) to build and operate this complex system.

Faster deployments?

I’m gonna start with one point from the advantage list: faster deployments. Think about Netflix, Facebook or Twitter: in their conference talks they describe the number of microservices they’re running and how they can commit something to Git and have it in production within a matter of hours. Is it too good to be true?

It’s definitely achievable in my opinion, but I admit I’ve never ever worked on a microservice project like this. I’m not saying it’s not possible, it’s just really hard to get there from a stability, infrastructure and cultural point of view.

Let me share how this usually panned out in my experience. Before writing a single line of code on a greenfield project, you usually start with some discovery: how the product can be transformed into a technical solution. You design the system, you design your microservices, how many of them there are gonna be, their responsibilities, etc.

One really educational project was where we did this exercise and we ended up with 80+ microservices in what, 4 months?

What these 80+ microservices meant in reality is that deploying a single service was definitely faster than putting all 80+ together into a monolith and deploying that, but…

The 80+ microservices were so excessively small that a single development unit (a story in the Agile world) never fit into just one service; every story touched several. The system was fundamentally screwed and the promise of faster deployment was gone immediately. We weren’t getting faster deployments but the contrary: slower ones. Much slower.

Plus, and I’ll come back to this multiple times, more moving pieces during a deployment means more potential faults. It happened a lot that the infra was not stable enough and deployments randomly failed because:

- Artifactory/Nexus/Docker repo was unavailable for a tiny fraction of a second when downloading/uploading packages

- The Jenkins builder randomly got stuck

That’s just one piece of the puzzle. The product has to be decomposed into microservices. Each service should be responsible for its own thing. For example, a recommendation service in the Netflix example should be responsible for giving recommendations to users.

Not everything is Netflix, and definitely not everything is so easy to decompose into services of the right size and with the right responsibility. This is where DDD and bounded contexts can help, but on the one hand they’re not that easy to practice, and on the other there’s sometimes not even enough time/openness to play with these things.

The supporting culture

Anyway, in my opinion the second difficulty with microservices is the organizational/project culture. What if the product (department) doesn’t give a damn about the underlying system architecture? I mean, should they?

An example: what if you have a complex architecture with tons of microservices? The Product Owner comes in and says to the team, let’s develop this entire feature. After the team analyzes the feature request, it turns out it’s going to touch 10-15 microservices because it’s connected with a lot of other existing features. What do you do in that case?

You try to break it down into smaller pieces, but it smells fishy because the feature doesn’t make sense in pieces and it adds a lot of overhead to release it service by service. You certainly cannot say to the Product Owner that it’s going to take 3-4x as long just because we’re using microservices, can you?

What would that conversation look like?

- Product Owner: Hey guys, I came up with this really great feature. Our competitors are already doing it so we gotta do it quickly. Is it possible to do it in 2 weeks?

- Team: Well, by the initial looks of it, yeah, we can do it. And the feature also looks to be a good idea to bring more customers. We’ll regroup and talk it through.

- Team: Okay, slight problem with that 2 weeks. Since we’re doing microservices to be faster, we need more time to implement this thing because we have to touch 15 services, so we’d need like 6 weeks to do the initial implementation.

- Product Owner: Initial implementation?

- Team: Yeah. It’s 15 services for which the communication is critical so the initial implementation won’t include error handling, resilient communication patterns, tracing for debugging purposes and other neat things. For that we’ll need an extra 4 weeks.

- * Product Owner jumps out of the window

Better fault isolation

This one is naturally true. If one service goes down, only that service will go down, right?

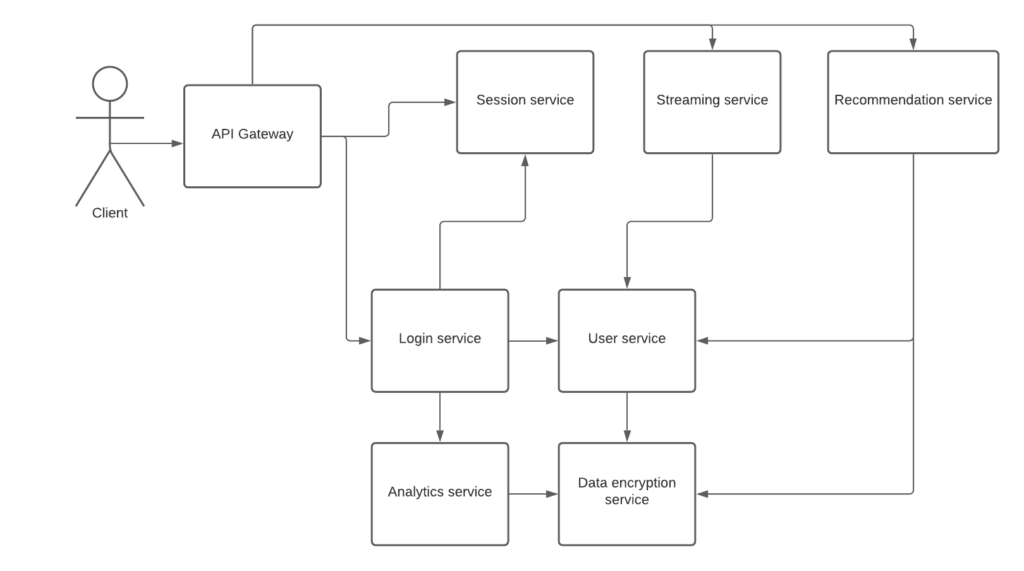

While that’s kinda true, it’s not black and white. Let me show you an imaginary architecture for Netflix – and I’m going to oversimplify it:

Let’s say the user wants to see recommendations. The request goes to the recommendation service, which queries the user data to know details about the user and then stores the recommendations in its database (which is not in the picture). Since this is user-related data, they might need to encrypt it as well.

Now, what happens if the data encryption service goes down? Can we still do recommendations? Not sure, because we’re unable to encrypt the user’s data, so naturally we’ll say, hey dude, we can’t give you recommendations right now, check back in 5 mins. The fault affected the system only up to the recommendation service, which gracefully responded with the fact that it’s unable to give recommendations right now.

But do you know how much work you have to do to gracefully handle these kinds of situations? A lot.
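To give a feel for what "gracefully" means here, below is a minimal sketch of the recommendation scenario. Everything in it is hypothetical: the `ServiceUnavailable` exception, the `encrypt` stand-in (which fakes the remote call with a flag instead of going over the network) and the response shape are my assumptions, not anything from a real system.

```python
class ServiceUnavailable(Exception):
    """Hypothetical error for a downstream service that cannot be reached."""


def encrypt(data, encryption_up=True):
    # Stand-in for a network call to the data encryption service.
    if not encryption_up:
        raise ServiceUnavailable("data encryption service is down")
    return data[::-1]  # placeholder transformation, NOT real encryption


def get_recommendations(user_id, encryption_up=True):
    recommendations = ["Show A", "Show B"]  # made-up data
    try:
        # The real service would store the encrypted result; we only call it.
        encrypt(",".join(recommendations), encryption_up)
    except ServiceUnavailable:
        # Degrade gracefully: tell the user to come back instead of crashing.
        return {
            "status": 503,
            "message": "No recommendations right now, check back in 5 mins.",
            "recommendations": [],
        }
    return {"status": 200, "recommendations": recommendations}


print(get_recommendations("user-1"))
print(get_recommendations("user-1", encryption_up=False))
```

And this is the easy version: one caller, one dependency, one failure mode. In a real system you would repeat this decision for every call between services.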

Let’s take another example. The user tries to log in to the system using the login service. The data encryption service is still failing, and the login service calls the analytics service to record some metrics, such as how many users try to log in within a one-minute timeframe, plus some other imaginary metrics. The analytics service, though, talks to the data encryption service, since this data also needs to be encrypted.

Now, the team who wrote the analytics service was in a rush and didn’t have time to implement proper error handling, so the issue with the data encryption service bubbles up to the login service. The login service was finished months ago and isn’t prepared to handle the underlying error from the analytics service, so user logins are simply rejected, even though it was only the non-critical analytics service that failed.

And I know what you’re thinking. Yeah, the team who implemented the login service was irresponsible for not preparing it for this case, but what if they thought the analytics service would handle this gracefully? That was written down in the API contract of the analytics service, yet it doesn’t work that way.
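The login scenario can be sketched like this. It is only an illustration under my own assumptions: `AnalyticsError`, `record_login_attempt` and `check_credentials` are hypothetical names, and the failing analytics service is simulated with a flag instead of a real remote call.

```python
import logging

logger = logging.getLogger("login-service")


class AnalyticsError(Exception):
    """Hypothetical error leaking out of the analytics service."""


def record_login_attempt(username, analytics_up=True):
    # Stand-in for the call to the (non-critical) analytics service.
    if not analytics_up:
        raise AnalyticsError("analytics failed: data encryption service is down")


def check_credentials(username, password):
    return password == "correct-password"  # placeholder check


def login_fragile(username, password, analytics_up=True):
    # Trusts the API contract: any analytics error rejects the login.
    record_login_attempt(username, analytics_up)
    return check_credentials(username, password)


def login_defensive(username, password, analytics_up=True):
    # Treats analytics as optional: log the problem and carry on.
    try:
        record_login_attempt(username, analytics_up)
    except Exception:
        logger.warning("analytics unavailable, continuing login", exc_info=True)
    return check_credentials(username, password)
```

With the analytics service down, `login_fragile` raises and the user is locked out, while `login_defensive` still lets a valid user in. The defensive version is only a few extra lines, but somebody has to know it’s needed, for every non-critical call in every service.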

So what happens when you’re in a monolith app? A service crashing doesn’t really have a meaning in that context, but assume that for some reason the database table that’s connected to data encryption is inaccessible.

In that case, the error handling is simple because the only thing you need to prepare for is an exception. Before praising monoliths too much, though, there’s a downside: if the monolith goes down, nothing works. So it’s a question of balance, but ask yourself: is it easier to implement a try-catch block, or to handle the errors of a synchronous HTTP call or asynchronous messaging?

I remember it was an enormous effort to standardize error handling across the 80+ microservices, and it took a team months to finish. And that didn’t even mean introducing error handling everywhere, just migrating the existing errors to a custom library we used, so that we could reduce the tedious work required for future error handling scenarios.
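Here is a rough side-by-side of the two, using only Python’s standard library. The URL, the `ciphertext` response field and both function names are assumptions made up for this sketch, and it still leaves out retries, circuit breakers and tracing.

```python
import json
import urllib.error
import urllib.request


def encrypt_in_process(data, encrypt):
    """Monolith style: the only thing to prepare for is an exception."""
    try:
        return encrypt(data)
    except Exception:
        return None


def encrypt_over_http(data, url, timeout=2.0):
    """Microservice style: the same call, but over the network."""
    request = urllib.request.Request(url, data=data.encode("utf-8"), method="POST")
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            # "ciphertext" is a hypothetical field in the response body.
            return json.loads(response.read())["ciphertext"]
    except urllib.error.HTTPError:
        return None  # the service answered, but with a 4xx/5xx status
    except urllib.error.URLError:
        return None  # DNS failure, refused connection, connect timeout
    except OSError:
        return None  # read timeout or connection dropped mid-response
    except (ValueError, KeyError, TypeError):
        return None  # body was not the JSON we expected
```

Each `except` branch in the second function is a separate decision someone has to make (retry, fall back or fail?), and that’s before you add async messaging to the mix.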

Takeaway

I’m not finished. See you in chapter 2.

Update: chapter 2 is here, The truth about starting with microservices.

P.S. Follow me on Facebook/Twitter if you feel like it.